Imagine a world where machines think, adapt, and learn: capabilities once believed to be the exclusive domain of humans and often portrayed in sci-fi movies. This reality is quickly becoming our new normal, thanks to the rise of artificial intelligence (AI). At the heart of modern AI development are neural networks, a type of machine learning model that solves problems conventional, rule-based programs typically struggle with. They excel at tasks that require pattern recognition, classification, and prediction.

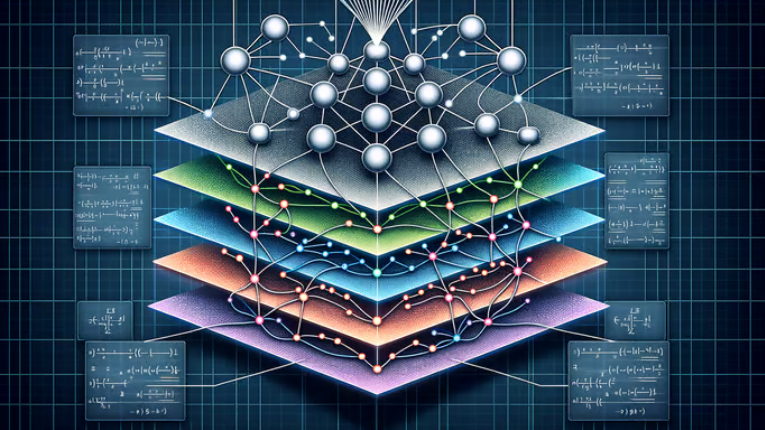

Neural networks bring together the fields of neuroscience and computer science, drawing on our knowledge of how the brain functions and mapping that knowledge onto software systems. Their structure is loosely modeled on the way the human brain processes information: both are composed of interconnected neurons organized into layers. Each artificial neuron performs a simple operation. It multiplies each of its inputs by a weight, sums the results, and passes that sum through a nonlinear activation function. The weights determine how strongly each input influences the neuron, while the activation function, repeated across many layers, allows the network to detect abstract, nonlinear features and patterns in the data. This ability to capture nonlinearity is what lets neural networks solve problems that conventional programs struggle with.

After the general structure is established, the network must be trained, typically on a large, diverse dataset. During training, the network repeatedly passes data through its layers, compares its outputs with the expected answers, and adjusts its weights to reduce the error. With more training and more data, neural networks typically become more accurate and robust, although training for too long on limited data can cause a model to overfit.
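To make the neuron computation and the weight-adjustment loop described above concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not how production networks are built: it uses a single neuron with a sigmoid activation, a toy dataset (the logical AND of two inputs), and hand-picked values for the learning rate and number of epochs. The names `neuron_output`, `train`, and `AND_SAMPLES` are made up for this example.

```python
import math

def sigmoid(z):
    """Nonlinear activation that squashes any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(weights, bias, inputs):
    """Weight each input, sum the results, then apply the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

def train(samples, epochs=5000, learning_rate=0.5):
    """Adjust the weights a little after every example to reduce the error.

    epochs and learning_rate are illustrative values, not tuned settings.
    """
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            prediction = neuron_output(weights, bias, inputs)
            # For a sigmoid neuron with cross-entropy loss, the gradient
            # with respect to the weighted sum is (prediction - target).
            error = prediction - target
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias -= learning_rate * error
    return weights, bias

# Toy dataset (assumption for this sketch): logical AND of two inputs.
AND_SAMPLES = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

if __name__ == "__main__":
    weights, bias = train(AND_SAMPLES)
    for inputs, target in AND_SAMPLES:
        output = neuron_output(weights, bias, inputs)
        print(inputs, "->", round(output, 3), "target:", target)
```

A single neuron like this can only separate data with a straight line. Real networks stack many such neurons into layers, which is what lets them capture the nonlinear patterns discussed above.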

Most modern neural networks are deep neural networks (DNNs), which have significantly more layers and neurons than earlier neural networks. As deep neural networks grow larger they become more capable, but they also require more resources and time to train. To address this resource consumption, a newer type of neural network, the spiking neural network (SNN), aims to replicate the brain’s energy efficiency. This brain-inspired model represents signals as discrete spikes over time rather than as continuous numerical values. SNN neurons are also sparsely active and rely on event-driven computation, performing operations only when a spike arrives. This can greatly reduce energy consumption compared to modern DNNs, in some reported cases by a few orders of magnitude, particularly on dedicated neuromorphic hardware.

Today, SNNs are often used in medical applications because of their strengths in pattern recognition and brain-like learning. For example, last year, researchers from Eindhoven University of Technology and Northwestern University developed a method of quickly identifying diseases using a neuromorphic chip, a piece of hardware based on SNN principles, and first tested its ability to identify cystic fibrosis. In the past, such chips were trained using external software and datasets, which was both time-consuming and inefficient. To streamline this process, the researchers created a chip that learns directly from patient data in real time, which makes the technology more practical for real-world applications. When the chip was tested on identifying high concentrations of chloride anions in sweat, a clear indicator of cystic fibrosis, it was largely successful and continued to improve with each mistake it made. This opens the door to rapid learning approaches, an advance that shifts technology from pre-programmed behavior toward artificial intelligence that can learn and adapt to its environment.
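The spike-based, event-driven computation that SNNs rely on can be sketched with a leaky integrate-and-fire neuron, one common simplified spiking model. This is a toy illustration only, not the method used on the chip described above. The function name `simulate_lif_events` and the values for `weight`, `leak`, and `threshold` are assumptions chosen for readability.

```python
def simulate_lif_events(spike_times, weight=0.6, leak=0.9, threshold=1.0):
    """Leaky integrate-and-fire neuron driven only by incoming spikes.

    spike_times: increasing integer time steps at which an input spike arrives.
    Returns the time steps at which the neuron itself fires.
    weight, leak, and threshold are illustrative values, not biological ones.
    """
    potential = 0.0   # membrane potential, the neuron's internal state
    last_time = 0     # time of the most recent input spike
    output_times = []
    for t in spike_times:  # no work is done between spikes
        # Apply all the decay that accumulated since the last event at once.
        potential *= leak ** (t - last_time)
        potential += weight  # the incoming spike raises the potential
        last_time = t
        if potential >= threshold:
            output_times.append(t)  # emit an output spike
            potential = 0.0         # reset after firing
    return output_times

if __name__ == "__main__":
    # Two spikes close together trigger firing; an isolated spike does not.
    print(simulate_lif_events([0, 1, 5, 7, 8]))  # prints [1, 7]
```

The loop runs once per incoming spike rather than once per time step, so a quiet input costs almost nothing to process. That sparsity is the basic idea behind the energy savings attributed to SNNs.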

Neural networks have profoundly shaped the evolution of AI, proving to be indispensable tools for solving complex problems that previously only humans could solve. AI has already defeated world-class champions in games such as Go and chess, and today it is implemented nearly everywhere, from the personalized advertisements you see on Amazon to the self-driving vehicles currently rising in popularity. By drawing inspiration from the brain’s neural structure, neural networks bring the strengths of the human brain to a broader scale and enable systems capable of learning and adapting over time. As research in neuroscience deepens, novel neural network designs such as SNNs may also offer more energy-efficient solutions. These developments are likely to extend the capabilities of modern AI, pushing the boundaries of what intelligent systems can achieve without sacrificing the sustainability of modern technology.